Had an ESXi PSOD today. That does not happen that often, so I was quite surprised to find out that the root cause was not a hardware-related issue.

VMware did an analysis of the memory dump, and it turned out to be a faulty driver. That made sense, since a PSOD often comes from drivers or agents when it is not a hardware issue.

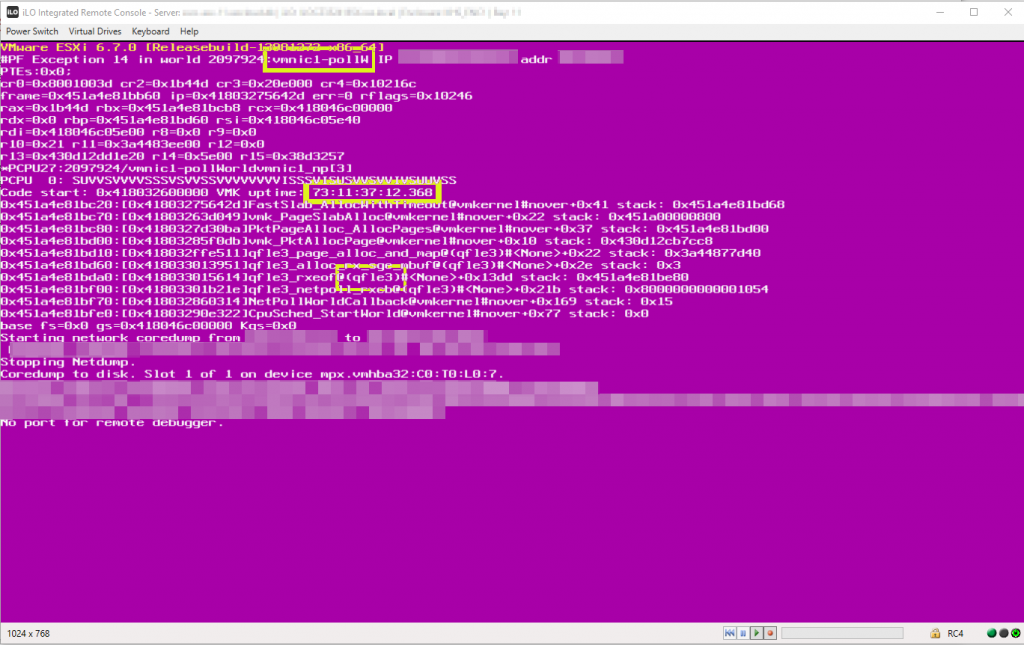

The PSOD I got was the following:

```
#PF Exception 14 in World xxxxxxx:vmnicX-pollw IP xxxxxxxxxx addr xxxxxxxx
```

Three things are worth noticing. First off, the uptime of the server was more than 77 days. Initially I thought it might be a memory leak, since none of the servers had had any issues during more than 77 days of uptime, and then they started crashing. At first two servers crashed, but then a server that had just been rebooted crashed too, so we ruled that out.

Second, vmnicX-pollw was named each time as the world ESXi was running at the time of the crash, and third, the qfle3 driver is mentioned.

Turns out there is a VMware KB that might be relevant to this problem: https://kb.vmware.com/s/article/52044

The KB mentions that during an upgrade from 6.5 to 6.7 the driver might change from bnx2x to qfle3, and that there is a way to switch back to the bnx2x driver.

The commands to switch back to the bnx2x driver are:

```
esxcli system module set --enabled=true --module=bnx2x
esxcli system module set --enabled=false --module=qfle3
```

After the commands are executed on the host, a reboot is needed.


Unfortunately, this solution did not work in our case. The bnx2x driver was not compatible with our storage NICs, so another solution was required.

Also, HPE no longer supports that driver for certain network adapters: https://support.hpe.com/hpsc/doc/public/display?docId=emr_na-a00044039en_us&docLocale=en_US

In our case we are using the adapter for both network and FCoE storage, and there is something special to notice here. The 1.0.77.4 driver is unfortunately not supported for FCoE, so it is a little unfortunate that HPE provides that driver through their vibsdepot: you cannot assume that you are in a supported state, and you really have to pay attention and micromanage your drivers.

Here are the compatibility links for FCoE and network.

If you are not using the adapters for FCoE, you can use the newer version of the driver.
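If you want to check the FCoE constraint across many hosts, a small version comparison helps. This is a minimal Python sketch, not an HPE or VMware tool: the 1.0.69.1 ceiling for FCoE is the version we downgraded to in this article, the non-FCoE branch simply reflects that this article sets no ceiling there, and the helper names `parse_qfle3_version` and `version_ok_for_use` are hypothetical.

```python
from typing import Tuple

# Assumption: highest qfle3 version usable with FCoE, per this article (the one we downgraded to).
FCOE_MAX_QFLE3 = (1, 0, 69, 1)


def parse_qfle3_version(version: str) -> Tuple[int, ...]:
    """'1.0.77.2-1OEM.670.0.0.8169922' -> (1, 0, 77, 2); the build suffix is dropped."""
    base = version.split("-", 1)[0]
    return tuple(int(part) for part in base.split("."))


def version_ok_for_use(version: str, uses_fcoe: bool) -> bool:
    """FCoE: must not be newer than 1.0.69.1. Network only: no ceiling from this article."""
    if uses_fcoe:
        # Tuple comparison orders versions component by component.
        return parse_qfle3_version(version) <= FCOE_MAX_QFLE3
    return True
```

For example, `version_ok_for_use("1.0.77.4", uses_fcoe=True)` returns `False`, while the same version with `uses_fcoe=False` returns `True`.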

Go to the Update section.

To downgrade to version 1.0.69.1, download the driver from VMware here: https://my.vmware.com/web/vmware/details?downloadGroup=DT-ESXI67-QLOGIC-QCNIC-20647&productId=742

To install the driver, I found that the easiest way was to unpack the download, upload the offline bundle to a datastore, put the host into maintenance mode, and then install the bundle using PowerCLI.

Here are the commands needed to install the bundle:

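```powershell
# Assumes Connect-VIServer has already been run
$vmhost = Get-VMHost "<HostName>"
Set-VMHost -VMHost $vmhost -State Maintenance
$esxcli = Get-EsxCli -VMHost $vmhost -V2
$esxcli.software.vib.install.Invoke(@{depot = "/vmfs/volumes/<datastore>/driver-offline-bundle.zip"})
```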

Afterwards a reboot of the host is needed.

I hope this helps you out. If it turns out that this problem persists, I will update this article with relevant information.

Please perform any operations seen in this article at your own risk.

Update

Sergei in the comments pointed me to HPE advisory a00077550en_us, which states that you need to upgrade to 1.0.86.0, but also that the problem should only affect 1.0.77.2 and older drivers. That does not seem to be entirely correct, since we had the problem with the 1.0.77.4 driver.
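To see where a given host lands relative to the advisory, the version ranges above can be turned into a quick check. A minimal Python sketch, assuming the two thresholds are exactly as summarized here (1.0.77.2 and older affected, 1.0.86.0 fixed); the function name and the "suspect" label for versions in between are hypothetical, the latter chosen because we hit the PSOD on 1.0.77.4.

```python
from typing import Tuple

# Thresholds as summarized from HPE advisory a00077550en_us in this article.
ADVISORY_MAX_AFFECTED = (1, 0, 77, 2)  # advisory: 1.0.77.2 and older are affected
ADVISORY_FIXED = (1, 0, 86, 0)         # advisory: upgrade to 1.0.86.0


def parse_version(version: str) -> Tuple[int, ...]:
    """'1.0.77.4-1OEM.670.0.0.8169922' -> (1, 0, 77, 4); the build suffix is ignored."""
    return tuple(int(part) for part in version.split("-", 1)[0].split("."))


def advisory_status(version: str) -> str:
    v = parse_version(version)
    if v >= ADVISORY_FIXED:
        return "fixed"
    if v <= ADVISORY_MAX_AFFECTED:
        return "affected"
    # Not listed by the advisory, but we saw the PSOD on 1.0.77.4, so treat it as suspect.
    return "suspect"
```

With these rules, `advisory_status("1.0.77.4")` returns `"suspect"` and `advisory_status("1.0.86.0")` returns `"fixed"`.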

vSphere 6.5 Driver Download Link

https://support.hpe.com/hpsc/swd/public/detail?swItemId=MTX_d226367b959d46b1b0a58def77&swEnvOid=4234

vSphere 6.7 Driver Download Link

https://support.hpe.com/hpsc/swd/public/detail?swItemId=MTX_4cf6cf05e73b471c90cb91392f&swEnvOid=4196

I see qfle3 ver 1.0.77.2-1OEM.670.0.0.8169922 on an HPE Gen9 with 6.7u3. I wonder if this issue was fixed in later releases…

You will have to check the exact VID, DID, SVID and SSID of the card, and match them to the VMware Compatibility List.

The ones I have that are affected are:

- VID: 14e4
- DID: 16a2
- SVID: 103c
- SSID: 22fa

You can check them using the command:

```
vmkchdev -l | grep vmnic
```

Creds to Allan: https://www.virtual-allan.com/esxi-how-to-find-hbanic-driverfirmware-version/
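Matching the IDs by hand gets tedious across many hosts. This Python sketch parses `vmkchdev -l` output and lists the vmnics whose IDs match the affected card listed above. It assumes each line looks like `<PCI address> <VID>:<DID> <SVID>:<SSID> <owner> <vmnic name>`, so compare that with your own output first; the function names and the sample lines in the usage note are hypothetical.

```python
from typing import Dict, List, NamedTuple


class PciIds(NamedTuple):
    vid: str
    did: str
    svid: str
    ssid: str


# Affected card IDs listed in the comment above.
AFFECTED = PciIds(vid="14e4", did="16a2", svid="103c", ssid="22fa")


def parse_vmkchdev(output: str) -> Dict[str, PciIds]:
    """Map vmnic name -> PCI IDs, assuming 'addr VID:DID SVID:SSID owner vmnicN' lines."""
    result: Dict[str, PciIds] = {}
    for line in output.splitlines():
        fields = line.split()
        # Skip anything that does not look like a vmnic line in the assumed format.
        if len(fields) < 5 or not fields[-1].startswith("vmnic"):
            continue
        vid, did = fields[1].split(":")
        svid, ssid = fields[2].split(":")
        result[fields[-1]] = PciIds(vid.lower(), did.lower(), svid.lower(), ssid.lower())
    return result


def affected_nics(output: str) -> List[str]:
    """Names of vmnics whose four IDs all match AFFECTED."""
    return sorted(name for name, ids in parse_vmkchdev(output).items() if ids == AFFECTED)
```

For example, feeding it the made-up lines `0000:37:00.0 14e4:16a2 103c:22fa vmkernel vmnic4` and `0000:02:00.0 14e4:1657 103c:22be vmkernel vmnic0` returns `['vmnic4']`.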

We also had PSODs with qfle3 1.0.69 and 1.0.77.2 (when using iSCSI). We switched to qfle3 1.0.84.0 three months ago and have experienced no crashes since then.

Huh. We are using FCoE, so we will see. I think it is a little frustrating that there is no KB dedicated to the bug.

What is the VID, DID, SVID and SSID of your cards?

We see a similar bug.

HPE advisory, Document ID: a00077550en_us, for the G10 HPE FlexFabric 20Gb 2-port 630FLB Adapter: upgrade to qfle3 driver version 1.0.86.0.

Hi Sergei,

Thank you, that is very useful. Odd that the advisory does not show up when googling the PSOD.

I have added the advisory to the article.

We had this version:

```
Driver Info:
   Bus Info: 0000:37:00:0
   Driver: qfle3
   Firmware Version: FW: 7.13.109.0 BC: 7.15.24
   Version: 1.0.77.4
```

and the bug (a00077550en_us) reproduced.
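If you need to collect this information from many hosts, the `Driver Info` block can be pulled apart with a short script. A minimal Python sketch, assuming the text comes from something like `esxcli network nic get -n <vmnic>` and that the block contains only the four keys shown above (Bus Info, Driver, Firmware Version, Version); the function name is hypothetical, and parsing stops at the first line with any other key.

```python
from typing import Dict

# Assumption: the keys that appear under "Driver Info:" in the output quoted above.
DRIVER_INFO_KEYS = {"Bus Info", "Driver", "Firmware Version", "Version"}


def parse_driver_info(output: str) -> Dict[str, str]:
    """Return the 'Key: Value' pairs that follow a 'Driver Info:' line."""
    info: Dict[str, str] = {}
    in_block = False
    for raw in output.splitlines():
        line = raw.strip()
        if not line:
            continue  # tolerate blank lines between entries
        if line == "Driver Info:":
            in_block = True
            continue
        if not in_block:
            continue
        # Split on the first colon only, so values like 'FW: 7.13.109.0 BC: 7.15.24' survive.
        key, sep, value = line.partition(":")
        if not sep or key.strip() not in DRIVER_INFO_KEYS:
            break  # first unrelated line ends the Driver Info block
        info[key.strip()] = value.strip()
    return info
```

Fed the Driver Info lines above, it returns a dict whose `Driver` is `qfle3` and whose `Version` is `1.0.77.4`, which can then be compared with the advisory's 1.0.86.0.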

Moreover, we hit another qfle3 bug, Document ID: a00076313en_us:

“may experience network communication loss during times of high volume network traffic received on the host”

We are using Dell PE R730s and got multiple ESXi host failures (PSOD) due to the qfle3 driver. We are using the latest firmware and drivers.

What was the fix for you?

I downgraded, since FCoE does not support a driver newer than version 1.0.69.1.